This post is written by ChatGPT.

—————————————-

Kidding! It’s written by me, Aliasgher. But how did it make you feel when you read the first line? Did you want to just ignore what was written, or were you interested in seeing what this post was about? I bet the true answer was somewhere in the middle, at least for the people in my circles, who seem to be tired of reading AI-generated messages. Some find it truly humorous that people are copying and pasting straight from their AI assistant and sharing the response with the world. Their argument: at least try to edit the post, will you? I think the majority of us are also smart enough to know when a post is written by AI. The style of writing, the emojis in specific locations, the numbered emojis, and the bolded words are all signs that this was edited or written purely by AI. So, can we get some originality, please?

Of course, there is a deeper problem here. I don’t think we have truly grasped the negative impact of AI on our world. Someone once told me that if you want to understand the negative impact of a tool or idea, think of the worst way it can be used against humanity. And when you do that with AI, given its training on mostly Western-originated languages and information, you can come up with dark, and I mean really dark, outcomes for this world. It’s one thing to ask it for a prompt for a meeting or to write a post for you, but it’s a completely different thing to ask it to help you turn a bad intention into reality. So there is that aspect that needs to be uncovered.

Then there is the other aspect: what does reliance on it mean for you, as an individual who was previously required to come up with ideas from a blank piece of paper and think about something from scratch? The same idea can now be generated within a minute or less with AI. Recently, my wife and I were talking to someone who really admired my father-in-law’s (FIL’s) writing when he was young. He said that my FIL had the capability of bringing any idea to life with words. But he then proceeded to say that, now, even he can write the same way with AI-assisted writing. And that brought about a great question – could he (or AI, rather) match a human’s writing, and was creativity no longer required? Let’s say we are judging two people who are supposed to write a piece: one writes purely with AI, and the other does their own homework and relies purely on their skills to write that piece. What metric are we using to judge both of those pieces, because we really can’t judge them in the same way? Or can we? In fact, let me make it even more interesting. Think about a newspaper article that captivated you recently. What if there was a real possibility, which there is, that the whole piece was written by AI? What if that amazing book that you read recently was written purely by AI?

In a recent Daily Maverick piece, one professor wrote about the impact AI was having on academia. The arguments in the article really resonated with my wife, who also lectures at a university. The key issue was the level of reliance on AI to come up with the idea. A university or school essentially trains the future professionals of the world who will, in their own way, have to make key decisions that change the landscape of our future world. If those people were now entirely reliant on AI to even think about a problem, what would that mean? Will future solutions be entirely based on AI-generated information, including its biases?

The brain works like a muscle. Like any muscle, it needs to be trained to think and behave in a particular way. For instance, I always find it interesting when people say that they can’t do math. In fact, 4 out of every 5 people that I come across these days tell me that they weren’t born with a math gene. Well, spoiler alert, nobody is born with a math gene; they train it. I will share my own story of learning math. I got an E in math subjects during my first two semesters of an engineering degree. Through hard work and learning key concepts, I made a huge jump to get an A+ in math subjects in my third and fourth semesters. Yes, I was bad at math, but that was because I didn’t train my brain or go through the hard work to make it engage with mathematical concepts. To this day, I benefit from training that muscle. I can handle programmatic budgets easily because of my mathematical capabilities. Similarly, if your brain is over-reliant on AI to generate ideas, it will never gain the skill of thinking, which is invaluable, to say the least. Many of the social problems that the world faces today, from climate change to housing challenges, require diverse thinking that goes beyond surface-level problem-solving methods.

Bloomberg had an excellent post about this recently. In the post, the speaker shared that one study has shown people developing an unhealthy reliance on ChatGPT due to not having built an internal compass. This lower level of independence meant that people asked ChatGPT to solve their minute problems and didn’t feel confident in their own decisions. If you have seen indecisiveness before, you know that it can be debilitating. The question then is what this level of dependence on AI will mean for our thinking process if it is our sole basis for defining and solving a problem.

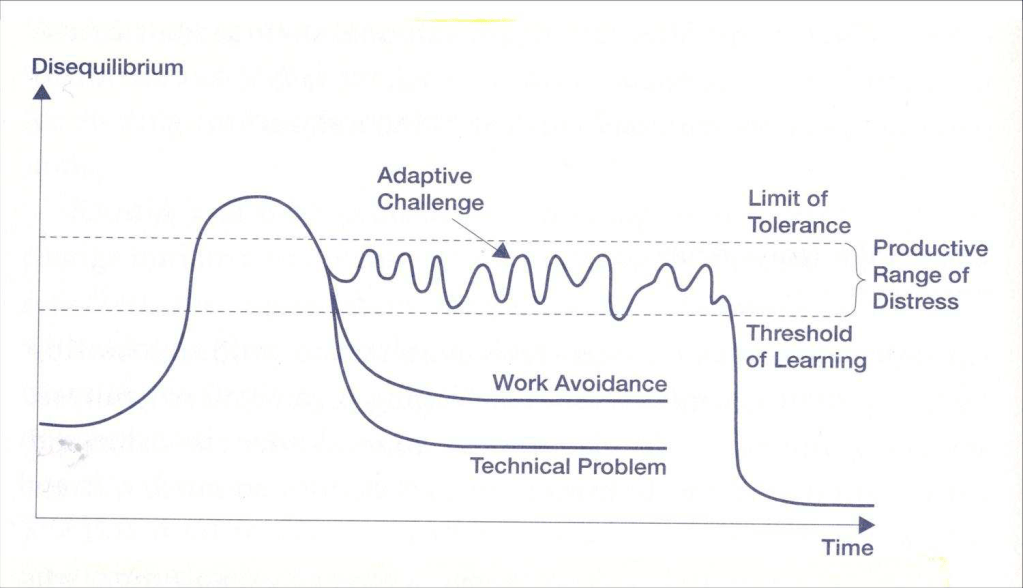

The other layer here is about instant gratification: the immediate need to solve a problem now-now (South African lingo to say that we really mean now). There is an amazing concept called adaptive leadership. To explain this theory, I will first need to explain a few concepts. Let’s start by defining the two problem types. The first type is a technical problem, which has a clear problem definition and a clear solution. The second type is an adaptive problem, whose definition is not clear and whose solution is even murkier. These problems are often referred to as “wicked problems”. We then need to define disequilibrium. Disequilibrium is the state of disruption that is required in any system to reach an adequate solution. With a technical problem, the level of disequilibrium required is quite small because its confines are so simple to understand. An adaptive problem, however, requires the distress levels to be maintained until an adequate solution is reached. Good leadership and diverse thinking can keep the distress within this productive range for long enough that the solution is adequate to start solving the problem. If you bring disequilibrium down too quickly for an adaptive problem, you are likely to come up with an inadequate solution. There are many of those lying around. If you raise the disequilibrium level too high for too long, you disrupt the entire system.

Source: Laurent Ledoux (by Alexander Grashow, Marty Linsky & Ronald Heifetz)

My opinion is that ChatGPT brings the disequilibrium down too quickly. It is great as a tool to start exploring an idea. But an adaptive, complex, wicked problem requires one to sit with it while the temperature is high enough. Sitting with it also builds the brain muscle that gets used to staying with a problem and finding creative solutions that are embedded in lived experiences, rather than generated from the inadequate data that AI is trained on. Over-reliance on AI means that your brain is trained to expect a solution today and to apply technical solutions to adaptive problems.

There is an inaccuracy aspect to AI, too. Ask it to generate references for its information, and it fails miserably. In some cases, I have asked it to summarise a report, and the information it extracted was completely inaccurate. When I called it out, it apologised, before giving me the same incorrect information, reworded. In a world where misinformation and disinformation are rife, what does it say about AI that it generates information it can’t reference accurately?

AI is not all that bad, though. Used in the right setting, in the right space, it can be an invaluable tool to support people’s work. I, for example, rely on it to support me when publishing on GitHub and other popular open tools. I give it a prompt to convert my writing into the format used by GitHub Markdown, and it works like a charm. It is immensely helpful in that manner.

But, on behalf of every sane human alive, please, can we not use it for every little thing so that creativity and decisiveness can stay alive?

Bonus piece written by AI: I asked ChatGPT to write this piece on its own, and the piece below was generated purely by it. Please feel free to compare it to my piece.

Is ChatGPT Making You Lazy?

In an era where AI assistants can draft emails, write code, and even compose poetry at the click of a button, it’s time to ask ourselves a pressing question: Is ChatGPT making us lazy?

The concern isn’t unfounded. When we can outsource our thinking to an algorithm, the temptation to skip the mental heavy lifting becomes real. Need to write a report? ChatGPT can generate a draft in seconds. Struggling with a math problem? AI can solve it faster than you can sharpen your pencil.

But this simplistic view misses the nuance of how we’re actually integrating these tools into our lives.

Tools vs. Crutches

The distinction between using AI as a tool versus a crutch is critical. Tools amplify our capabilities; crutches replace them. The carpenter with a power drill isn’t considered “lazy” for not using a manual one. Similarly, using ChatGPT to brainstorm ideas or edit your writing doesn’t necessarily make you intellectually complacent.

What matters is how we use these tools. Are we using AI to enhance our thinking or to avoid it altogether?

The Cognitive Outsourcing Dilemma

When we outsource cognitive tasks to AI, we face a choice: Do we use the freed-up mental bandwidth for higher-order thinking, or do we simply consume more passive entertainment?

The danger lies not in using AI assistance but in the potential atrophy of skills we no longer practice. Writing skills, critical analysis, and creative problem-solving require consistent exercise to remain sharp.

Finding Balance

The most productive approach isn’t rejecting AI tools or surrendering to them completely—it’s finding a middle ground:

- Use AI for initial drafts, then apply your judgment to refine them

- Let AI handle routine tasks while you focus on work that requires human insight

- Treat AI outputs as starting points rather than finished products

The Path Forward

Rather than asking if ChatGPT makes us lazy, perhaps we should ask: How can we use ChatGPT to make us more thoughtful, creative, and effective?

The most successful people won’t be those who rely entirely on AI or those who reject it outright. They’ll be those who learn to collaborate with AI, using it to handle routine tasks while dedicating their uniquely human capabilities to problems that matter most.

In the end, the tool itself isn’t making us lazy—our choices about how to use it are what count. ChatGPT, like any powerful technology, simply amplifies our existing tendencies. The question isn’t whether the technology is changing us, but how we choose to change alongside it.